
March 10, 2026

AI and Privacy: How Artificial Intelligence Uses Your Data

Artificial intelligence is becoming a normal part of daily life. People use AI to write emails, summarize documents, answer questions, help with homework, and even assist doctors in diagnosing diseases. These tools save time and make many tasks easier.

But there is an important reality behind this convenience: AI systems work by analyzing large amounts of data.

Every time we interact with an AI system—by typing a prompt, uploading a document, or asking a question—we are providing information that helps the system function. In many cases, users do not realize how much information they are sharing.

This creates an important question for individuals and organizations alike:

How does AI affect our privacy?

To answer that, we first need to understand how AI tools handle the information we give them.

Do AI Tools Collect Your Data?

AI tools often feel like private assistants. When you type a question into an AI chatbot or ask it to help with a task, the experience feels similar to having a one-on-one conversation.

However, AI systems are not private assistants. They are large computer systems trained on vast datasets.

To work effectively, AI models must learn from large amounts of text, images, or other information. This training helps the system recognize patterns and generate useful responses.

Because of this, AI systems depend heavily on data. The more data available to them, the better they usually perform.

This is why AI services often collect information such as:

- User prompts or questions

- Uploaded documents

- Interaction patterns

- Feedback from users

This does not necessarily mean every interaction is permanently stored, and retention practices vary by provider.

It does show, however, that AI systems rely on a continuous flow of data to improve and function effectively.

So, what exactly happens to the information we enter into an AI system?

What Happens to Your Data When You Use AI?

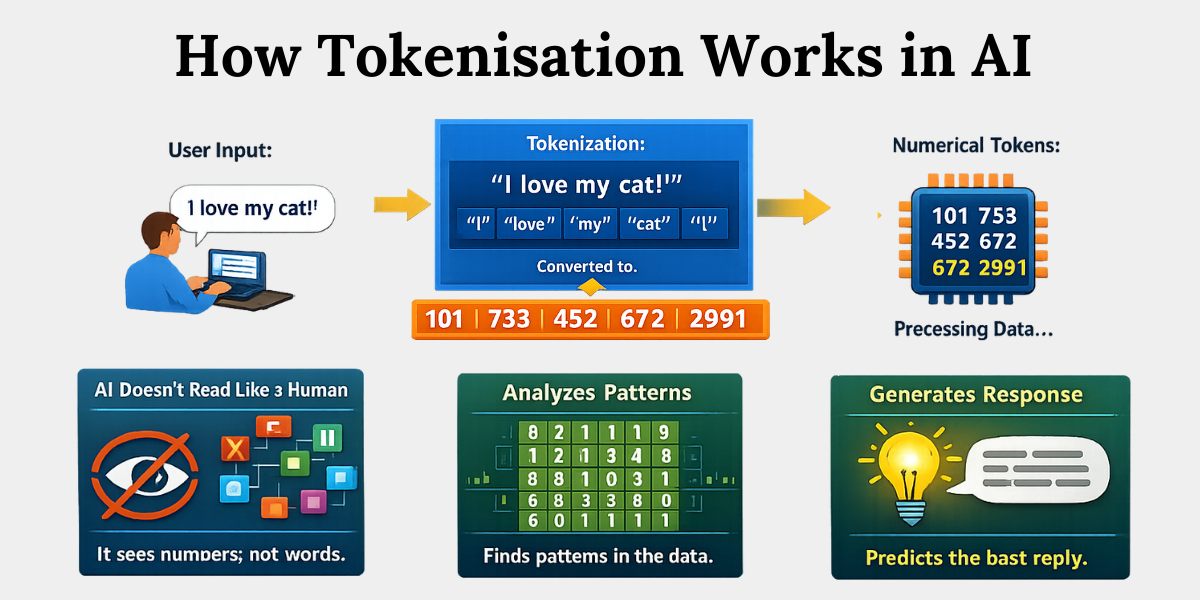

When you type something into an AI tool, the system first converts your words into numerical values that a computer can process. This process is called tokenization.

Tokenization breaks sentences into smaller pieces called tokens. These tokens represent parts of words or phrases in numerical form.
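To make the idea concrete, here is a minimal sketch of tokenization in Python. It is a toy, word-level version: production AI systems generally use more sophisticated subword tokenizers, and the names `build_vocab` and `tokenize` are hypothetical helpers invented for this illustration.

```python
import re

# Toy word-level tokenizer (an assumption for illustration; real systems
# typically split text into subword pieces instead of whole words).
PIECE_PATTERN = re.compile(r"\w+|[^\w\s]")

def build_vocab(texts):
    """Assign an integer ID to every distinct piece seen in the texts."""
    vocab = {"<unk>": 0}  # ID 0 is reserved for pieces never seen before
    for text in texts:
        for piece in PIECE_PATTERN.findall(text.lower()):
            if piece not in vocab:
                vocab[piece] = len(vocab)
    return vocab

def tokenize(text, vocab):
    """Convert text into a list of integer token IDs."""
    pieces = PIECE_PATTERN.findall(text.lower())
    return [vocab.get(piece, vocab["<unk>"]) for piece in pieces]

vocab = build_vocab(["Summarize this report, please."])
token_ids = tokenize("Please summarize this invoice.", vocab)
print(token_ids)
```

The word "invoice" was not in the vocabulary, so it maps to the unknown-token ID. The point of the sketch is only that the system ends up working with a list of numbers rather than the words themselves.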

The AI model then analyzes these tokens using a large network of mathematical calculations. These calculations rely on model parameters, often called weights, that the system learned during training.

In simple terms:

- The AI does not read text like a human.

- It processes numbers that represent pieces of language.

- It uses patterns learned from training data to predict the best possible response.
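The points above can be sketched with a deliberately tiny stand-in for a language model. This example assumes a simple word-pair counting approach, which is far simpler than the neural networks used in real AI tools; `train_bigram` and `predict_next` are hypothetical names, and the counts play the role that weights play in a real model.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count which word follows which; the counts act as the learned 'weights'."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower seen in training, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

corpus = [
    "the model reads numbers",
    "the model predicts words",
    "the model predicts tokens",
]
weights = train_bigram(corpus)
prediction = predict_next(weights, "model")
print(prediction)
```

The prediction comes entirely from patterns in the training sentences. Nothing in the sketch looks up a stored copy of a conversation.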

Importantly, AI models typically do not store conversations the same way databases store files. Instead, they generate answers based on patterns learned during training.

Even so, researchers have found that models can sometimes memorize and reproduce fragments of the data they were trained on. This possibility is one reason privacy experts pay close attention to how training data is collected and used.

But does this mean AI is a threat to our privacy?

Is AI a Threat to Privacy?

Artificial intelligence can create privacy risks because it analyzes large datasets to find patterns about people and their behavior.

Even if a person does not directly share sensitive information, AI systems can sometimes infer information from patterns in other data.

For example, research has shown that data such as purchase history, location information, or browsing habits can reveal insights about:

- Health conditions

- Political preferences

- Lifestyle habits

- Personal interests

These insights are generated through data analysis and pattern recognition.

This means privacy concerns are not only about the information people intentionally share. They also relate to what AI systems can learn indirectly from data patterns.

At this point, many people assume that privacy laws should be able to control these risks.

But regulating AI is not always straightforward.

Why Traditional Privacy Laws Struggle to Regulate AI Systems

Most privacy laws were written before modern AI systems became widely used.

These laws mainly focus on how organizations collect, store, and share personal data in databases.

AI systems operate differently.

Instead of simply storing data, AI models learn from data during the training process. The patterns learned during training become part of the model itself.

This creates challenges for certain privacy rules.

1. Right to deletion becomes difficult with AI models

For example, many privacy regulations give individuals the right to request deletion of their personal data. In traditional systems, organizations can remove that data from their databases.

However, if the information has already been used to train an AI model, removing it becomes much more complicated.
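A small sketch can show why. It assumes a toy "model" that is nothing more than word counts learned from user records, and the names `train`, `database`, and the sample records are all hypothetical. Real models are far more complex, but the same basic problem applies.

```python
from collections import Counter

def train(records):
    """Toy 'model': word counts learned from every record in the database."""
    model = Counter()
    for text in records.values():
        model.update(text.lower().split())
    return model

# Hypothetical user records, invented for this illustration.
database = {
    "user_1": "alice likes hiking",
    "user_2": "bob likes chess",
}
model = train(database)

# A deletion request removes the record from the database...
del database["user_1"]

# ...but the already-trained model still carries what it learned.
still_in_model = model["alice"] > 0

# In this toy setup, only retraining on the remaining data removes the trace.
retrained = train(database)
gone_after_retrain = retrained["alice"] == 0
print(still_in_model, gone_after_retrain)
```

Retraining a counter takes an instant. Retraining a large AI model can be slow and costly, which is part of why deletion requests are harder to honor once data has been used for training.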

2. Data minimization conflicts with AI training needs

Similarly, privacy laws often encourage data minimization, meaning organizations should only collect the data they truly need.

But AI systems often require large datasets to achieve reliable performance.
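In practice, data minimization can be as simple as keeping an allow-list of fields and dropping everything else before data is stored or used. The sketch below assumes a hypothetical feature that only needs two fields; the field names and the `minimize` helper are invented for illustration.

```python
# Hypothetical allow-list: the only fields this imagined feature needs.
REQUIRED_FIELDS = {"age_group", "purchase_category"}

def minimize(record, required=REQUIRED_FIELDS):
    """Return a copy of the record containing only the required fields."""
    return {key: value for key, value in record.items() if key in required}

raw_record = {
    "name": "Alice Example",
    "email": "alice@example.com",
    "age_group": "25-34",
    "purchase_category": "books",
}
minimal_record = minimize(raw_record)
print(minimal_record)
```

The tension described above is visible even here: every field that is dropped is also a field the AI system can no longer learn from.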

Because of these differences, regulators around the world are now working on new frameworks specifically designed for AI.

Now that we understand the regulatory challenges, it helps to look at how AI is already affecting privacy in real life.

Let’s look at some everyday examples.

Real Examples of How AI Is Changing Everyday Privacy

Artificial intelligence is already used in healthcare, education, business tools, and consumer technology. These applications demonstrate both the benefits of AI and the privacy questions that come with it.

How AI Is Transforming Healthcare and Medical Research

AI is helping doctors analyze medical images such as X-rays and mammograms. In some cases, AI systems can detect patterns associated with diseases earlier than traditional methods.

Risk: Large Medical Datasets Raise Privacy Concerns

However, developing these systems requires large datasets of medical information, which raises important questions about how patient data is collected and protected.

How AI Tools Analyze Personal Communication

AI writing assistants are increasingly used in workplaces and schools. These tools can draft emails, summarize documents, or generate reports.

To perform these tasks, AI systems must analyze the text provided by users.

For example, if someone asks an AI tool to summarize a report, the system needs to process the entire document to understand its contents.

Risk: Sensitive Information May Be Exposed

This creates potential privacy concerns when documents contain sensitive personal or corporate information.

How Smart Assistants and AI Devices Collect Usage Data

Smart assistants, recommendation systems, and personalized services rely on user data to improve their performance.

For example:

- Streaming platforms analyze viewing habits to recommend shows.

- Shopping websites analyze purchase history to suggest products.

- Voice assistants process voice commands to respond accurately.

These systems improve user experience by learning from interaction data.

Risk: AI Systems Can Build Detailed Behavioral Profiles

However, over time they can also create detailed records of user behavior.

Beyond direct data collection, there is another important privacy issue to understand.

AI can sometimes learn information about people that they never intentionally shared.

AI Inference: How AI Can Predict Things About You

One of the lesser-known privacy concerns is AI inference.

AI inference refers to the ability of an AI system to draw conclusions or make predictions about a person based on patterns in available data, even if that information was never directly shared.

For example, analyzing browsing habits, purchasing patterns, or social media activity can reveal insights about a person's interests or lifestyle.

This means privacy risks arise not only from what people intentionally share, but also from what AI systems learn from patterns in existing data.
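As a rough illustration of inference, the sketch below guesses a person's interests from a shopping list. It assumes a hand-written lookup table (`SIGNALS`) in place of a trained model, and all item names, labels, and the `infer_interests` helper are hypothetical.

```python
from collections import Counter

# Hypothetical mapping from purchased items to an interest they may hint at.
# A real system would learn such associations from data instead.
SIGNALS = {
    "running shoes": "fitness",
    "protein powder": "fitness",
    "yoga mat": "fitness",
    "tent": "outdoors",
    "hiking boots": "outdoors",
}

def infer_interests(purchases, min_signals=2):
    """Return interests supported by at least `min_signals` purchases."""
    hits = Counter(SIGNALS[item] for item in purchases if item in SIGNALS)
    return sorted(label for label, count in hits.items() if count >= min_signals)

purchases = ["running shoes", "notebook", "protein powder", "tent"]
inferred = infer_interests(purchases)
print(inferred)
```

The shopper never stated an interest in fitness. The label comes only from the combination of purchases, which is the kind of indirect conclusion this section describes.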

So far, we have discussed how AI can affect privacy. This naturally leads to another important question:

What can individuals do to protect themselves?

Let’s explore some practical steps.

How To Protect Your Privacy While Using AI Tools

People can take several practical steps to reduce privacy risks when using AI tools. While AI technology cannot be completely avoided, responsible use can significantly reduce unnecessary exposure of personal information.

Here are some simple actions individuals can take.

1. Avoid Sharing Sensitive Information

Users should avoid entering sensitive information such as:

- Financial details

- Passwords

- Identification numbers

- Medical records

AI tools are designed for assistance and analysis, not for storing confidential data.
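One way to act on this advice is to mask obvious identifiers before a prompt is sent anywhere. The following is a minimal sketch that assumes two simple regular-expression patterns (email addresses and card-like digit sequences). It will miss many kinds of sensitive data and is not a substitute for careful review; the `redact` helper and the patterns are illustrative only.

```python
import re

# Illustrative patterns only; they catch common formats, not every case.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}

def redact(text):
    """Replace each match with a bracketed placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

prompt = "Contact jane.doe@example.com, card 4111 1111 1111 1111."
safe_prompt = redact(prompt)
print(safe_prompt)
```

The redacted prompt keeps enough context for the AI tool to be useful while leaving out the identifiers themselves.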

2. Review AI Privacy Settings

Many AI services provide settings that allow users to control how their data is stored or used.

For example, some platforms allow users to disable chat history or opt out of data being used for system improvement.

Reviewing these settings can help reduce data exposure.

3. Be Careful When Uploading Documents

Uploading documents to AI tools can be helpful for tasks such as summarizing reports or analyzing information.

However, documents may contain personal or confidential information.

Before uploading files, users should confirm that the content does not include sensitive data.
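A quick automated check can support this step, though it cannot replace reading the document. The sketch below assumes a short, hypothetical list of warning words and reports the lines where they appear; `SENSITIVE_MARKERS` and `find_sensitive_lines` are names invented for this example.

```python
# Hypothetical warning words; a real checklist would be tailored to the
# organization and the kinds of documents it handles.
SENSITIVE_MARKERS = ("password", "confidential", "account number", "diagnosis")

def find_sensitive_lines(document_text, markers=SENSITIVE_MARKERS):
    """Return (line_number, marker) pairs for lines containing a marker."""
    findings = []
    for line_number, line in enumerate(document_text.splitlines(), start=1):
        lowered = line.lower()
        for marker in markers:
            if marker in lowered:
                findings.append((line_number, marker))
    return findings

report = "Q3 summary\nCONFIDENTIAL: draft only\nAdmin password: hunter2\n"
findings = find_sensitive_lines(report)
safe_to_upload = not findings
print(findings, safe_to_upload)
```

An empty result does not prove a document is safe. It only means none of the listed words appeared.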

4. Understand the Platform’s Privacy Policy

Different AI providers have different policies about how user data is handled.

Some platforms may use interactions to improve their models, while others offer stricter privacy protections.

Reading these policies helps users understand how their information may be used.

5. Use AI Tools Responsibly

AI tools are designed to assist with tasks and information processing.

Using them carefully and avoiding unnecessary sharing of personal details helps reduce privacy risks.

Conclusion

Artificial intelligence is one of the most powerful technologies shaping our future. It can improve healthcare, increase productivity, and make knowledge more accessible than ever before.

But the same technology also depends on large amounts of data, which raises important questions about how our personal information is used and protected.

As AI becomes a bigger part of our daily lives, the challenge is not to reject it but to use it responsibly. The real goal is to find the right balance, where we benefit from AI’s convenience without losing control over our privacy.

The future of AI should not only be intelligent. It should also be responsible and respectful of personal privacy.
